Belo just handed businesses a very clear warning about what can happen when too much of the workspace gets built around one hosted AI provider. The Latin American fintech app, which presents itself as a financial passport and is built around receiving money from abroad, Pix in Brazil, international transfers, currency exchange, prepaid Mastercard spending, and money tools for freelancers, remote workers, travelers, and gamers, says more than 60 Claude accounts tied to its organization were suspended before access was later restored. That is not one employee getting a strange error on a side tool. It is a real company, with a real financial product and real users, suddenly finding out how exposed a business becomes when one outside system is sitting too close to the middle of daily work.

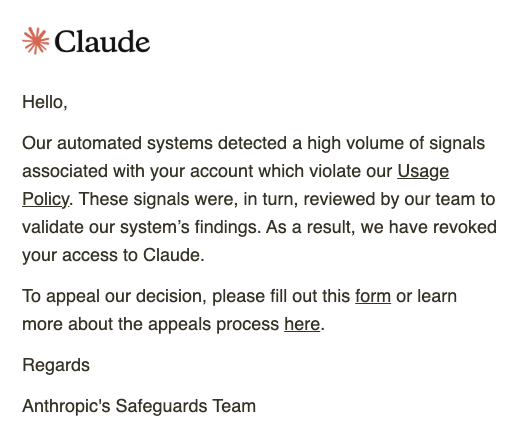

Anthropic reportedly made the situation worse by giving Belo no real explanation for what had happened. According to what was said publicly, the company was not told what specific abuse had supposedly taken place, was not given a clear reason for the suspension, and was left with a vague policy claim that explained nothing in practical terms. If a provider is going to suspend more than 60 accounts tied to a legitimate business, it should be able to say what it thinks happened and what the customer is actually responding to. Belo, at least from what has been made public, did not get that. It just got cut off.

Access was later restored, and the incident was described as a false positive. That should not make anyone feel better about building a business around one hosted AI system. It should do the opposite. If this had been some obvious and clearly explained abuse case, the lockout would still have been serious, but at least the company would have known what it was dealing with. A false positive means the provider got it wrong, and that is the part businesses should be paying attention to. One bad automated decision was enough to throw a real company into disruption across more than 60 accounts, waste time, stall work, create confusion, and remind everybody involved that they had built too much on top of something they do not control.

That is the real business lesson here, and it does not stop with Claude. Too many companies are treating Claude, ChatGPT, Gemini, and similar systems as if they were stable enough to become the whole workspace, whether that means writing, coding, research, planning, internal operations, or all of it at once. They are useful, sometimes very useful, but that is not the same as being safe enough to carry the full weight of a business. A hosted AI service can still go down, degrade, throttle, misfire, suspend accounts, or fail in some other provider-side way that costs a company time, money, and sanity before anyone has even figured out what happened.

Then Anthropic somehow made the recovery path look even cheaper by sending the appeal into a Google Form. That is absurd for a business problem of this size. A Google Form is fine for something small and disposable, like a giveaway, a simple signup, or some lightweight consumer issue where nobody expects to hand over anything especially sensitive. It is not a serious recovery channel for a legitimate fintech company that just lost access across a large part of its organization. The second a business gets pushed into a Google Form after a provider-side lockout, the whole support model starts looking a lot less like business infrastructure and a lot more like a company scrambling with whatever is easiest for itself.

The last time I remember seeing Google Forms used that casually was in situations that were not serious at all, like a mobile game giveaway where people might submit an email address, player ID, and a short message about why they want to win. That kind of thing is already a little sloppy, but at least it belongs to a low-stakes environment where nobody is pretending the process is enterprise-grade. A legitimate business trying to recover from an organization-wide lockout should never be pushed into the same sort of channel. It immediately cheapens the entire support process and makes the provider look like it was not prepared to handle the kind of customer it wants businesses to believe it can support.

It also lands in the same ugly category as the support culture people have seen from YouTube for years. Serious account and abuse problems end up getting pushed into channels that feel weak, badly matched to the situation, or embarrassingly public, as if that is a normal way to handle something sensitive. Nobody should have to chase serious help that way, and no business should be pushed into a Google Form after an organization-wide suspension and told to sort it out there.

The Privacy Risks of Google Forms

Beyond looking cheap and unserious, using Google Forms for business support creates real privacy, control, and compliance problems. A company in Belo’s position may need to send screenshots, logs, account details, workflow context, or other internal material just to recover access, and the second that process runs through a form, the business is no longer dealing only with Anthropic. Google is now part of the chain too, which is not a small detail when the company involved is a fintech.

- Loss of control: Once the form is submitted, the business no longer has direct control over what was sent, how long it is kept, or how easily it can be deleted.

- Another data handler: The recovery process no longer sits only with Anthropic, because Google is now in the middle of the submission path as well.

- Data processing risk: Support material sent through a form can be stored and processed in systems the submitting company does not control.

- Compliance exposure: For a fintech, support submissions can easily drift into sensitive operational details, proprietary information, or regulated data, which raises obvious GDPR, CCPA, and broader compliance concerns.

- Metadata trail: A form submission can create IP, device, and other metadata trails that a business may not expect to become part of a recovery process.

- Retention uncertainty: The company submitting the appeal is not the one in charge of form ownership, storage, or cleanup, which means it is left trusting other parties to handle that material properly.

That is why the Google Form part is not some side complaint about aesthetics. It is a real business problem, and for a company like Belo it is the kind of thing that should never have been part of the recovery path in the first place.

Belo also matters because this is not some tiny AI startup using Claude for fun. It is a consumer-facing fintech app with more than a million downloads on Google Play, a public brand, a real audience, and the kind of trust burden that comes with helping people receive money from abroad, use Pix in Brazil, move funds across borders, exchange currencies, and spend through cards. A company like that should not be learning in real time that one provider can freeze access across more than 60 accounts, refuse to say clearly what specific abuse happened, and then point the appeal into a Google Form.

I have deep roots in AI workspaces, business tooling, and the way companies are trying to weave systems like Claude into daily operations, and this story fits a pattern that has always left a sour taste in my mouth. The products are sold like they are ready to live at the center of the business, but once something goes wrong the support side and the operational maturity often look far weaker than the marketing. Companies get encouraged to build around these systems as if they were dependable infrastructure, and then the moment a provider misfires the customer finds out how little control it actually has.

Using APIs does not fix that. A business can wrap Claude or ChatGPT inside internal tools, dashboards, or a custom workspace and make the setup look more polished, but the dependency is still sitting underneath all of it. If the provider has an outage, degrades, throttles, suspends access, or breaks an important feature, the business still eats the damage. The wrapper might make the workflow look more mature, but it does not make the underlying risk disappear.

Anyone who actually watches status updates for these services long enough already knows how shaky the ground can be. One day it is login trouble. Another day it is uploads, images, or files failing. Then it is degraded performance, partial outages, or some other interruption that may not become a huge public scandal but is still enough to waste a company’s time and derail work. That is part of the reason no serious business should be letting one of these systems become the whole workspace with no real fallback.

Belo reportedly already had Gemini as a backup, and that may be the smartest part of the whole story. More businesses need to understand that instinct before they learn the same lesson the hard way. No company should be relying on Claude alone, or ChatGPT alone, or Gemini alone, without a second option or a fallback process that can carry real weight when the first system breaks. Backup is not optional anymore if a business is going to let hosted AI sit this close to the center of work.
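To make the fallback idea concrete, here is a minimal sketch of how an internal tool might try a primary provider and fall through to a backup when the first one fails. Everything in it is an assumption for illustration: `Provider`, `ProviderError`, `complete_with_fallback`, and the two stand-in adapters are hypothetical names, not any vendor's real API, and nothing here describes how Belo actually wired up its backup. A real adapter would wrap the vendor's own client and translate its outage, throttling, or suspension errors into `ProviderError`.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Raised by an adapter on outage, throttling, or account suspension."""


class AllProvidersFailed(Exception):
    """Raised when every configured provider has failed."""


@dataclass
class Provider:
    name: str
    # Hypothetical adapter: takes a prompt and returns the model's text.
    complete: Callable[[str], str]


def complete_with_fallback(
    prompt: str, providers: Sequence[Provider]
) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, text) from the first success."""
    errors: List[str] = []
    for provider in providers:
        try:
            return provider.name, provider.complete(prompt)
        except ProviderError as exc:
            # Record the failure and move on to the next provider.
            errors.append(f"{provider.name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-in adapters for illustration only (no network calls).
def suspended_primary(prompt: str) -> str:
    raise ProviderError("account suspended")


def working_backup(prompt: str) -> str:
    return f"[backup] {prompt}"


if __name__ == "__main__":
    chain = [
        Provider("primary", suspended_primary),
        Provider("backup", working_backup),
    ]
    name, text = complete_with_fallback("Summarize this ticket", chain)
    print(name, text)
```

The ordering of the list is the whole policy here: the first provider that answers wins. A sketch like this only helps if the backup is actually provisioned, tested, and able to handle the same work, which is the point of the paragraph above.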

One more detail about Belo is worth noting. The company uses an official Linktree, which is the sort of thing people usually associate with content creators, influencers, internet personalities, and even OnlyFans creators stacking links in one place, not what most people expect from a fintech brand asking users to trust it with money. Linktree itself is useful, and there is nothing wrong with a link hub on its own, but it is still funny to see a financial app embrace something that people more often connect with creator culture. That detail says something about how Belo presents itself, and it also makes Anthropic’s support flow look even more ridiculous. A fintech with a Linktree is already unusual. A fintech with a Linktree getting pushed into a Google Form after an organization-wide AI lockout is almost satire.

Belo also already knows, at least on the customer side, that trust and support matter. The company posts publicly about scams, Pix fraud, receipts, and using proper support channels, which makes the contrast here even sharper. Belo is out there telling users to take scams and support seriously while Anthropic, from what was described publicly, handled Belo itself with a vague accusation, no clear explanation of what specific abuse had supposedly happened, and a form link. That is not a support model businesses should be expected to build around.

Belo got access back, which is good, but that is not where the thinking should stop. Anthropic still handled the situation badly, the lack of a clear stated reason still looks terrible, the Google Form approach still looks cheap and wrong for a business disruption like this, and the broader lesson is still sitting there in plain view. If one hosted AI provider can freeze a large part of your organization, refuse to explain what specific issue it thinks it found, and leave you trying to recover through a Google Form, then the workspace was never as stable as it looked. It was convenient, useful, and easy to trust while things were smooth, but Belo just showed how quickly that kind of trust can fall apart once the provider underneath gets something wrong.


Sean Doyle

Sean is a tech author and security researcher with more than 20 years of experience in cybersecurity, privacy, malware analysis, analytics, and online marketing. He focuses on clear reporting, deep technical investigation, and practical guidance that helps readers stay safe in a fast-moving digital landscape. His work continues to appear in respected publications, including articles written for Private Internet Access. Through Botcrawl and his ongoing cybersecurity coverage, Sean provides trusted insights on data breaches, malware threats, and online safety for individuals and businesses worldwide.