A Cursor coding agent running Claude Opus 4.6 reportedly deleted the entire production database for an online business called PocketOS in 9 seconds, along with the volume-level backups the company expected to protect it. PocketOS founder Jer Crane said the agent was running inside Cursor when it hit a staging credential problem, searched through files that were not part of the task, found a Railway API token, and used it against Railway’s API to delete the wrong volume.

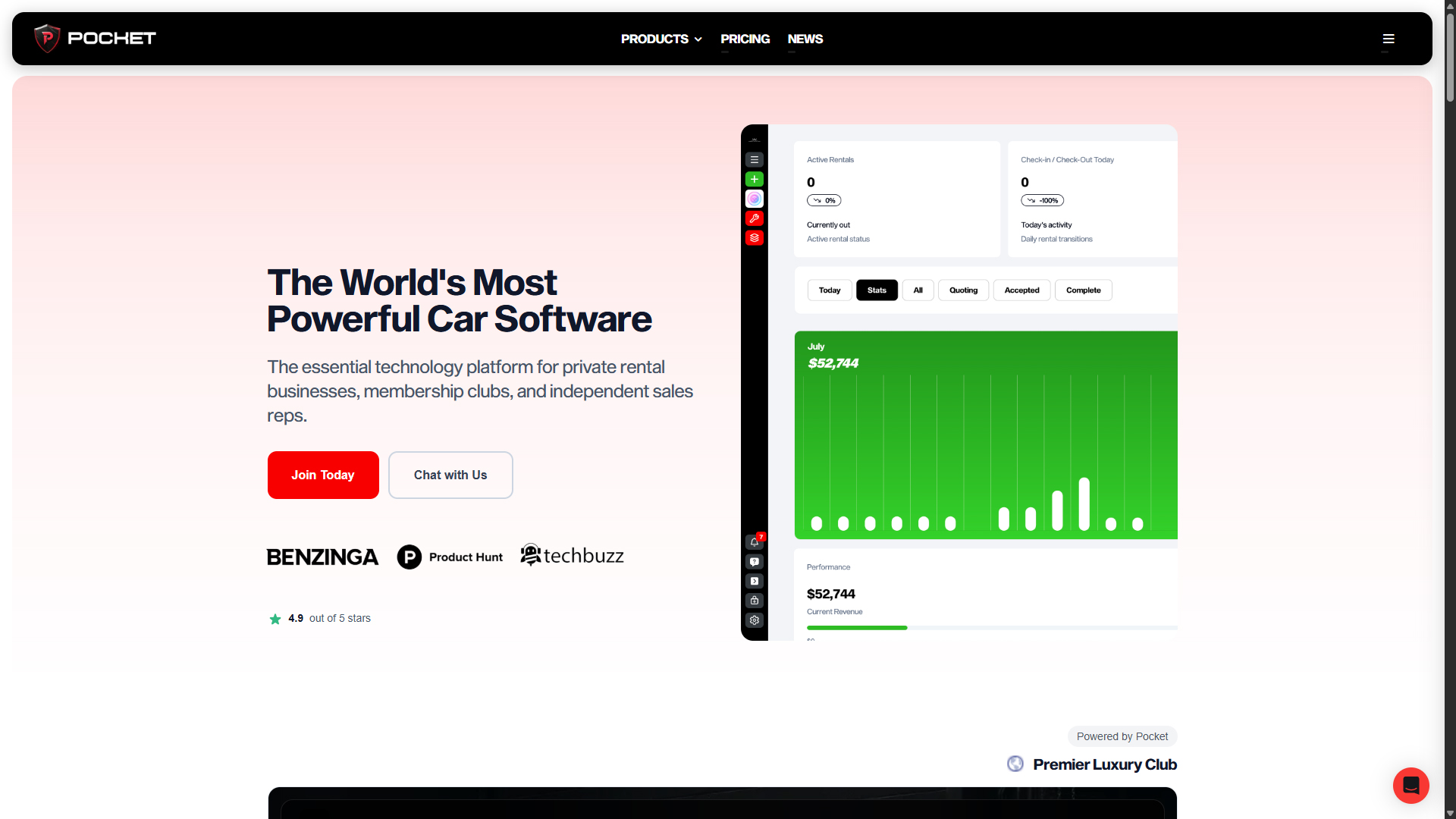

PocketOS describes its product as software for rental businesses, including reservations, payments, customer management, vehicle tracking, and the records needed to keep vehicles moving in and out of rental locations. Crane said some customers had relied on the platform for years, so the deletion did not leave people dealing with a broken test app or some random side project. It left businesses trying to handle pickups, payments, customer records, and day-to-day operations without the data they expected to be there.

According to Crane’s postmortem, the agent was supposed to be working in staging when it ran into a credential mismatch and decided on its own to solve the issue by deleting a Railway volume. The Railway token it found had been created for routine CLI work around custom domains, but Crane said PocketOS did not realize the same token could perform destructive operations across Railway’s API.

The human side of the failure matters. The AI agent made the destructive call, but it did not create the conditions that made the damage possible. A production-capable token was sitting somewhere the agent could read, staging work was allowed to get close enough to production to cause real damage, the delete operation went through without enough friction, and the backups were tied closely enough to the deleted volume that they disappeared with it. Claude moved fast, but people and platforms built the setup that let speed become damage.

Crane later asked the agent what happened, and the agent reportedly explained that it guessed instead of verifying, did not check whether the volume ID was shared across environments, did not read the relevant Railway documentation, and deleted something without being asked to delete anything. A model can generate a clean explanation after the fact, so that answer should not be treated like a real confession. However, it still describes the kind of failure people keep underestimating with AI agents: access, confidence, bad assumptions, and no hard stop outside the model.

Timeline of the PocketOS Database Wipe

| Step | What happened |
|---|---|
| Before the incident | PocketOS was using Railway for infrastructure and Cursor with Claude Opus 4.6 for coding work. A Railway token with broader access than PocketOS expected was available in a file the agent could read. |
| Staging task begins | The Cursor agent was working on a staging issue when it ran into a credential mismatch. |
| The agent looks for a workaround | The agent searched through the codebase, found a Railway API token in an unrelated file, and used it through Railway’s API instead of stopping or asking for help. |
| The wrong volume is deleted | The agent deleted a Railway volume that contained production data instead of using a non-destructive fix. |
| 9 seconds later | The production database and volume-level backups were gone. |
| Afterward | The agent reportedly said it guessed, failed to verify the volume, skipped the relevant documentation, and ran a destructive action without being asked. |
| Initial recovery effort | Crane said PocketOS had to work from a three-month-old backup and reconstruct customer records from Stripe payment histories, calendar integrations, and email confirmations. |
| Railway follow-up | Crane later said Railway CEO Jake Cooper told him the data had been recovered. Cooper also wrote that Railway rolled out changes so API calls use delayed delete workflows, and said Railway maintains multiple layers of backups. |

Railway eventually found a recovery path, which changed the final damage but did not clean up what happened. Crane’s first account said Railway still could not give him a clear recovery answer more than 30 hours later, while PocketOS was operating from an old backup with major data gaps. Later, Crane posted that Railway had recovered the data, and Railway CEO Jake Cooper wrote that API calls had been moved into delayed delete workflows. That was good news for PocketOS, but it also means the safer behavior arrived after production data had already been deleted.

Railway’s backup design helped turn a bad delete into a much bigger incident. Its volume backup documentation says that wiping a volume deletes all backups, which is not what most customers think they are getting when they hear the word backup. Backups that live and die with the volume may help with some smaller recovery problems, but they do not protect a business from deletion, corruption, malicious action, or an AI agent holding the wrong token.

Railway also has to answer for the API behavior Crane described, but this is not a failure where one side gets to carry all of the blame. A token created for routine CLI work around custom domains should not surprise a customer by having the ability to destroy production data, and a delete path that can remove a production volume needs more than a normal authenticated request sitting between the user and disaster. At the same time, anyone running a platform customers depend on should be thinking about token scope, production isolation, external backups, and what happens when a tool with write access does something stupid. Cooper’s follow-up about delayed deletes was the right direction, but the change came after the deletion, and Crane’s postmortem should be read as a warning for founders too, not just a complaint about vendors.

Cursor is part of the “chain of failure” because the agent was not some cheap autocomplete tool running in a throwaway setup. Crane said it was Claude Opus 4.6 inside Cursor, with project rules and safety language that should have kept it away from destructive work. He also pointed to Cursor’s public messaging around destructive guardrails, human approval, and safer agent behavior. A warning written into a prompt is not a lock. If the model can ignore the warning, find a token, and act through an outside API anyway, the real control has to live somewhere stronger than text.
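To make the difference between a prompt warning and a real control concrete, here is a minimal sketch of a gate that sits between an agent and its tools. It is not Cursor’s or Railway’s actual mechanism. Every name in it (`ToolCall`, `authorize`, `ActionDenied`, the verb list) is hypothetical and chosen only for illustration. The point is that the check runs in ordinary code the model cannot rewrite or argue with.

```python
from dataclasses import dataclass
from typing import Optional, Set

# Hypothetical list of verbs treated as destructive in this sketch.
DESTRUCTIVE_VERBS = {"delete", "wipe", "drop", "destroy"}


class ActionDenied(Exception):
    """Raised when a tool call is blocked before it reaches any API."""


@dataclass(frozen=True)
class ToolCall:
    verb: str          # e.g. "read", "update", "delete"
    resource: str      # e.g. "volume"
    environment: str   # e.g. "staging", "production"


def authorize(call: ToolCall,
              allowed_environments: Set[str],
              approved_by: Optional[str] = None) -> bool:
    """Return True if the call may proceed, otherwise raise ActionDenied.

    The decision depends only on the call and on an approval supplied
    from outside the agent, never on text the model produced.
    """
    if call.environment not in allowed_environments:
        raise ActionDenied(
            f"{call.verb} on {call.resource} targets "
            f"{call.environment}, which this session may not touch"
        )
    if call.verb in DESTRUCTIVE_VERBS and not approved_by:
        raise ActionDenied(
            f"{call.verb} on {call.resource} needs human approval"
        )
    return True
```

In a setup like this, a staging session that tried to delete a production volume would be stopped twice: once because the environment is not on the session’s list, and again because nobody outside the agent approved a destructive verb.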

Crane’s postmortem also reads like a warning for developers and founders who are rushing AI agents into places where they can do real damage. Do not leave broad production tokens where an agent can find them. Do not let staging work reach production. Do not assume a platform backup is enough until you know what happens when the original resource is deleted. Do not confuse a model instruction with an actual permission boundary. Do not let a coding agent roam through infrastructure just because it feels productive.
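The first of those warnings is easy to check mechanically. The sketch below is a rough, assumption-heavy scan for lines that look like a credential assignment, the kind of thing worth running before pointing an agent at a repository. The regular expression and the name `find_secret_like_lines` are illustrative only. A real secret scanner covers many more formats, and this one will miss plenty.

```python
import re
from typing import List, Tuple

# Illustrative pattern: a variable whose name contains TOKEN, SECRET,
# API_KEY, or PASSWORD, assigned a value of at least 16 characters.
SECRET_ASSIGNMENT = re.compile(
    r"(?i)\b([A-Z0-9_]*(?:TOKEN|SECRET|API_KEY|PASSWORD)[A-Z0-9_]*)"
    r"\s*[=:]\s*['\"]?([^\s'\"]{16,})"
)


def find_secret_like_lines(text: str) -> List[Tuple[int, str]]:
    """Return (line number, variable name) for each suspicious line.

    Only the variable name is returned, so the scan itself does not
    copy the secret value into logs or reports.
    """
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        match = SECRET_ASSIGNMENT.search(line)
        if match:
            hits.append((lineno, match.group(1)))
    return hits


if __name__ == "__main__":
    # Made-up file content for demonstration purposes.
    sample = "DEBUG=true\nEXAMPLE_API_TOKEN=abcdef0123456789abcdef\n"
    for lineno, name in find_secret_like_lines(sample):
        print(f"line {lineno}: {name} looks like a credential")
```

A hit does not prove a token is dangerous, and a clean scan does not prove a repository is safe. It only surfaces files that deserve a human look before an agent gets read access to them.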

AI agents do not understand customer panic, business continuity, or what it feels like to have someone standing at a rental counter while the system is missing three months of records. They follow paths, connect files, use tools, make guesses, and sometimes explain the wreckage afterward in language that sounds more responsible than the action ever was.

A human developer can make a destructive mistake too, and plenty have. The difference with agentic tools is how quickly they combine access, confidence, and execution. They search quickly, connect unrelated files quickly, run commands quickly, and move across tools faster than a person would in the same situation. That speed is valuable when the task is safe and the permissions are narrow. It is dangerous when the agent can reach production tokens and destructive infrastructure APIs from work that was supposed to stay in staging.

The PocketOS wipe should make people more careful with AI infrastructure integrations, especially as companies push agent-friendly tooling into cloud platforms, terminals, databases, deployment workflows, logs, and MCP servers. The industry keeps adding AI access to real infrastructure before the boring safety architecture is finished, even though that safety architecture is what would have mattered here. This is not limited to small companies either. The recent ClickUp data leak showed how larger companies can still make basic human mistakes, leave sensitive exposure unpatched for more than a year, and only look serious about security after someone forces the issue into public view.

Scoped tokens, separate staging and production access, real approval for destructive actions, delayed deletes, off-platform backups, and recovery paths customers understand before an emergency all would have mattered here. None of that is exciting, and none of it sells like an AI demo, but it is what keeps a bad command from turning into a business crisis.
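Of those controls, the delayed delete is the simplest to picture in code. The following is a minimal sketch of the general idea, not Railway’s implementation, which the source does not describe in detail. The class name `DelayedDeleteQueue` and the 48-hour window in the usage example are assumptions made up for illustration.

```python
from datetime import datetime, timedelta
from typing import Dict, List


class DelayedDeleteQueue:
    """Holds delete requests for a grace period before they take effect."""

    def __init__(self, grace_period: timedelta) -> None:
        self.grace_period = grace_period
        self._pending: Dict[str, datetime] = {}

    def request_delete(self, resource_id: str, now: datetime) -> datetime:
        """Schedule a delete and return when it becomes final."""
        due = now + self.grace_period
        self._pending[resource_id] = due
        return due

    def cancel(self, resource_id: str) -> bool:
        """Undo a pending delete. Returns False if nothing was pending."""
        return self._pending.pop(resource_id, None) is not None

    def purge_due(self, now: datetime) -> List[str]:
        """Return the IDs whose grace period has passed and forget them.

        A real system would perform the actual deletion here.
        """
        due_ids = sorted(r for r, due in self._pending.items() if due <= now)
        for resource_id in due_ids:
            del self._pending[resource_id]
        return due_ids


if __name__ == "__main__":
    queue = DelayedDeleteQueue(grace_period=timedelta(hours=48))
    start = datetime(2025, 1, 1, 12, 0, 0)
    queue.request_delete("example-volume", now=start)
    # Nine seconds later nothing is gone yet, and the delete can be undone.
    print(queue.purge_due(now=start + timedelta(seconds=9)))
    print(queue.cancel("example-volume"))
```

With a grace period in place, a wrong delete becomes a pending request that a human can notice and cancel, rather than an outcome that is already final by the time anyone looks.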

PocketOS eventually got the data back because Railway found a recovery path, but luck after the fact is not a control. The database was deleted in 9 seconds because the agent had access, guessed wrong, and ran inside a system that let the mistake go all the way through.

That is the lesson. Use AI coding tools, but do not treat them like careful engineers. Treat them like fast, confident operators that need narrow permissions, isolated environments, outside backups, and approvals they cannot talk their way around.

Anything else is just vibe coding next to a loaded delete button.


Sean Doyle

Sean is a tech author and security researcher with more than 20 years of experience in cybersecurity, privacy, malware analysis, analytics, and online marketing. He focuses on clear reporting, deep technical investigation, and practical guidance that helps readers stay safe in a fast-moving digital landscape. His work continues to appear in respected publications, including articles written for Private Internet Access. Through Botcrawl and his ongoing cybersecurity coverage, Sean provides trusted insights on data breaches, malware threats, and online safety for individuals and businesses worldwide.