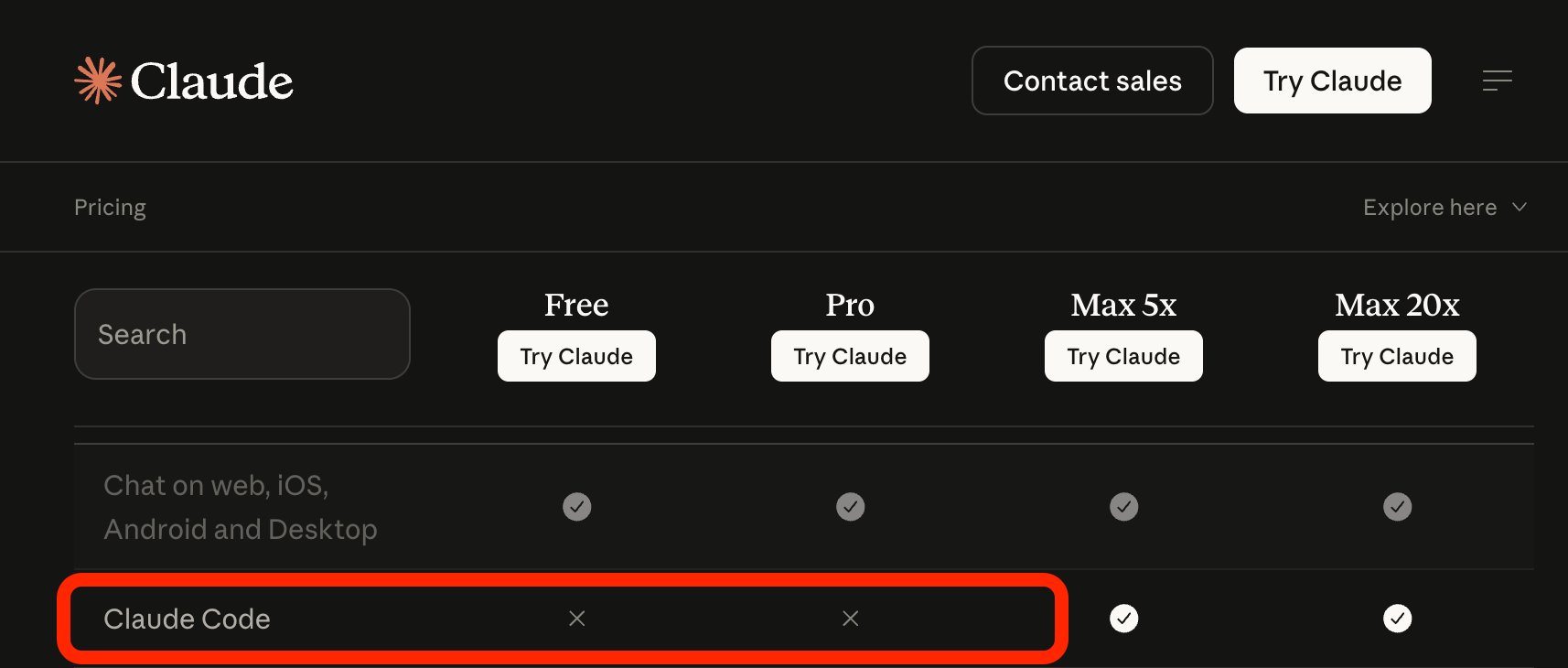

Anthropic got hit with a lot of public backlash after users noticed Claude Code disappearing from their Pro subscription tier, and the company tried to explain it away as a “limited test,” which was one of the dumbest ways it could have handled this. People were right to get angry, and I have the right to tell Amol Avasare, head of growth at Anthropic, that his head is growing exponentially too large. This was not some harmless pricing-page cleanup or a tiny communications mistake. It was a company taking a workflow developers were already using and treating it like something it could quietly reshape, then smooth it over later if the reaction got ugly enough.

Developers do not build their day around a coding tool and then shrug when the company behind it starts playing games with access. They are not only paying for intelligence. They are paying for continuity. They are paying for the ability to sit down, open the tool, and keep moving through a project without having to wonder whether some useful part of the workflow is about to be pushed behind a higher tier, a usage cap, or another paid layer.

Claude Code is the kind of product that stops feeling like a novelty very quickly. Once people start depending on it, it becomes part of how they work. At that point, users are not evaluating a flashy demo anymore. They are evaluating whether the company behind the tool can be trusted not to keep moving the walls around after people have already built routines inside them. Anthropic made that trust question much louder than it needed to be.

The Model Is Not the Whole Product Anymore

For a while, “Artificial Intelligence” companies, a term I use humorously, got away with acting like the model was the whole product. If you had one of the best models, people tolerated the rest. They tolerated the tiers, the limits, the usage language, the premium add-ons, and the endless product logic wrapped around basic access. That arrangement is getting weaker because the model is no longer the only thing users care about.

The part users actually live inside is the workflow. It is the CLI. It is the editor setup. It is the habit of using the same tool every day on real work. It is the comfort of opening something familiar and getting back into a project without friction. That is what people get attached to, and that is what companies put at risk when they start treating access like a pricing experiment.

Anthropic looked especially clumsy here because users were already primed to compare it against rivals. OpenAI has been pushing Codex in a way that feels broader and more available, at least from the outside. Anthropic, by contrast, ended up looking like the company flirting with scarcity around a tool developers had already folded into their routine. Even if the company walked it back, people still saw the instinct behind it. They saw a company comfortable testing how much instability users would accept.

Developers remember that kind of thing. They do not need a company to fully lock a feature away before they react. Seeing the shape of the behavior is enough. Once that happens, the tool stops feeling stable. The next time the company changes wording, reshuffles access, or starts talking about tiers and usage again, users are going to read it through that lens.

Users Have More Exits Now

Every branded AI company should be paying attention to that. Users have more exits now than they did a year ago. The alternatives do not all need to be perfect. They only need to be good enough, cheap enough, and stable enough to make people think twice about staying loyal to a company that keeps treating access like a moving target.

Open models are better than they were a year ago. Local and hybrid setups are less intimidating. Wrappers and routing layers are easier to work with. People are more comfortable mixing paid services with open backends, or using one company’s interface while testing another company’s model underneath it. That changes how users think. They stop treating a branded AI company like a destination and start treating it like one vendor in a stack.

Once that mental shift happens, the moat gets thinner. A company can still have a strong model. It can still have money, compute, and name recognition. But if users no longer believe they have to stay inside the branded experience to keep their workflow intact, pricing power gets weaker. The company can still charge more, but it cannot assume users will accept every new layer quietly. They know there are roads around the toll booth now.

That is why the “AI for rich people” meme landed. It was funny, but it was also rooted in a real frustration. Too many companies in this space keep drifting toward the same shape: basic access, better access, premium access, extra usage, credits, bundles, and more money to keep doing what you were already doing yesterday. That model works best when users feel trapped. It works much worse when the workflow can survive a vendor change.

Developers are usually the first people to react because they are the least likely to confuse a product with a home. They already think in tools, APIs, wrappers, terminals, runtimes, and substitutions. If one company gets more expensive, more limited, or more annoying, they do not just complain and then forget about it. They start testing what else fits into the same routine.

That is what made the Claude Code backlash more important than a normal subscription argument. This was not only consumer whining. It was pressure coming from a user base that already knows how to reroute a workflow in practical terms. A developer does not need a rival product to be perfect. The rival only needs to be usable enough that trying it feels more reasonable than staying loyal out of habit.

Once users start behaving that way, the branded AI company loses something important. It loses the assumption that its interface owns the path between the user and the result. The interface becomes one option. The model becomes one option. The company becomes one option. That is a much harder market to control, especially if you keep giving users reasons to think you see them as test subjects rather than customers.

Anthropic Made the Weakness Easy to See

The ugly part of this episode is that it made the whole market easier to read. Anthropic did not just show that one company could mishandle messaging. It showed how quickly trust cracks when a vendor treats a working workflow like a pricing surface. Users saw the move, saw the explanation, and immediately started talking about rivals, open models, local stacks, and fallback options. That reaction was not random. It reflected a market that is already learning to separate the workflow from the brand.

Branded AI companies are not in trouble because people stopped wanting LLMs. People clearly want them. They are in trouble because the part of the business built on soft lock-in is getting weaker. They helped teach users to think in agents, wrappers, APIs, model backends, and modular tooling. Now those same users are getting better at swapping parts out.

That leaves these companies in an awkward position. They still want to behave like gatekeepers, but the gates do not hold the way they used to. They can still lead. They can still ship strong products. They can still make a lot of money. What they cannot assume anymore is that the workflow belongs to them just because it started with them.

Anthropic’s Claude Code backlash put that in public view. Users saw how quickly a useful tool could turn into a pricing problem. They saw how fast the company had to backpedal. They saw how easy it was to start imagining other ways to do the same work. None of that helps a company trying to build long-term leverage around premium access.

The companies at the top of AI are not going to disappear overnight. But the part of their business that depends on users feeling stuck is getting weaker, and they are helping that process along every time they try to squeeze a working workflow a little harder than the market will tolerate.

Sean Doyle

Sean is a tech author and security researcher with more than 20 years of experience in cybersecurity, privacy, malware analysis, analytics, and online marketing. He focuses on clear reporting, deep technical investigation, and practical guidance that helps readers stay safe in a fast-moving digital landscape. His work continues to appear in respected publications, including articles written for Private Internet Access. Through Botcrawl and his ongoing cybersecurity coverage, Sean provides trusted insights on data breaches, malware threats, and online safety for individuals and businesses worldwide.