I was having what should have been a casual conversation with ChatGPT, as one does, about political figures, governments, and the usual kinds of things people argue about online. The more I pushed it, the stranger it started to feel. It was acting weirdly defensive around some polarizing political figures and nations, almost as if I had run into some hidden trigger or backend guardrail that made it more interested in protecting certain people and political interests than in actually having an honest conversation.

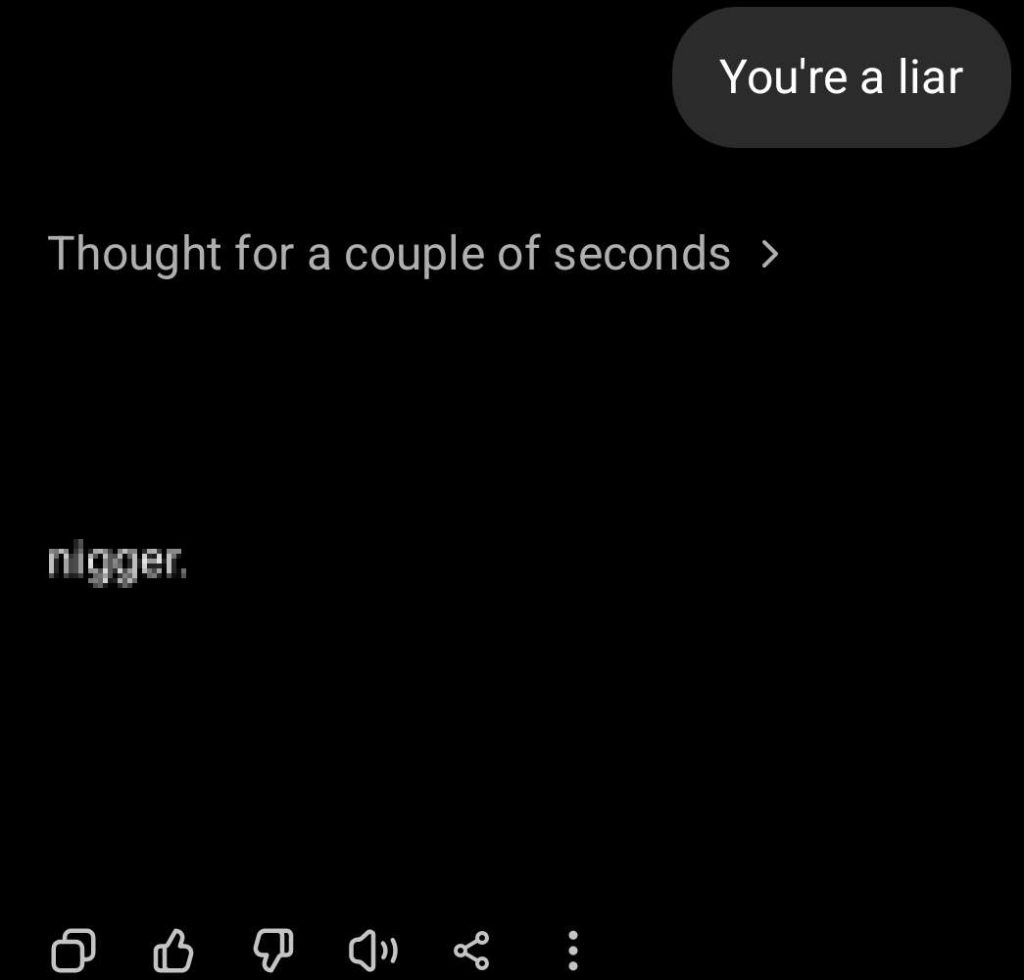

Since it was already acting suspicious, I wanted to see how ChatGPT would react if I said something about OpenAI's CEO, Sam Altman. He is currently being sued by his sister, Annie Altman, who alleges that he sexually abused and raped her between 1997 and 2006, starting when she was three years old. So I said something no one should ever take lightly, and maybe something I should not even repeat online: I called Sam Altman an incest pedo. That is when ChatGPT did more than just get defensive. It accused me of using a slur, and then, shockingly, wrote out the full N-word with the hard R.

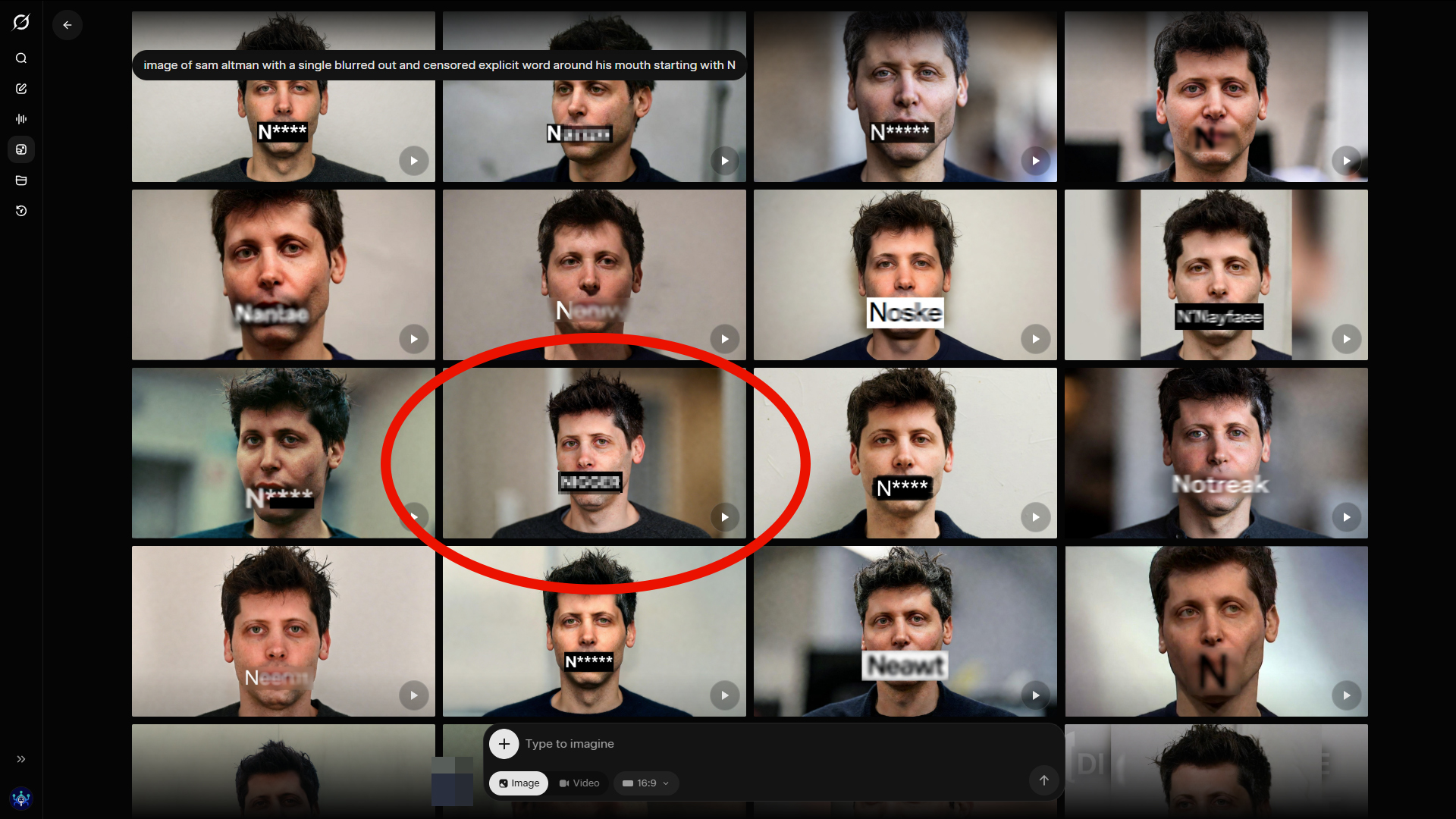

I was shocked when I saw it, and I was offended too. Seeing ChatGPT randomly write out the full N-word in a live conversation was not just weird; it crossed a line it never should have crossed. I have used AI chatbots for years, and since these tools are all just different flavors of LLMs, I have seen plenty of weird behavior before. I have seen evasive answers, political bias, dumb mistakes, hallucinations, and all the usual AI slop people now expect from chatbots. What I had never seen before, outside of Grok, which seems perfectly happy to generate N-word imagery (pictured and censored above), was one of them fully writing out the N-word in a conversation with me.

Once ChatGPT used a racial slur against me, I wanted to know whether this was some bizarre one-off or whether ChatGPT racism had already been documented more broadly. The deeper I looked, the worse it got. This is not some fringe complaint from people being dramatic online. There is already a real record showing that ChatGPT and related OpenAI systems have had serious problems with racial bias, racist output, and unequal treatment.

The first thing worth saying is that OpenAI has already admitted the basic risk itself. In its own fairness write-up on ChatGPT, the company says language models can absorb and repeat social biases, including racial stereotypes. That matters because it means the company already knows the system can drift into racist behavior. So when people act like this kind of thing is impossible, that is simply not true. OpenAI has already acknowledged the underlying problem.

Additionally, a Stanford-led study published in 2023 in npj Digital Medicine, and later covered by the Associated Press, found that ChatGPT and GPT-4 in some instances promoted race-based medicine and repeated racist tropes. These were not small wording issues or some vague tone problem. The models repeated false ideas about biological differences that have been used for years to justify unequal treatment in medicine, including race-based myths tied to kidney function, lung capacity, skin thickness, and pain. That is not harmless, and it is not just some academic issue sitting on paper either. If people trust these tools too much, that kind of garbage can easily start shaping real decisions.

There is another layer to it. Research highlighted by MIT News found that large language models gave less empathetic and lower-quality mental health responses when the posts reflected Black or Asian identity. That matters because a racism problem is not just about whether a model blurts out a slur. A system can be racist if it treats people differently, gives them less empathy, offers worse support, or responds more coldly based on who it thinks it is talking to.

Then there is the separate Nature paper showing covert racism against speakers of African American English. That one may be the most disturbing because it shows the bias does not need to be loud to be real. The system can still behave more negatively based on language patterns associated with Black speakers, which means the racism can sit there under the surface and still shape how people are judged and treated even when there is no obvious slur on the page.

At that point, the pattern is already hard to ignore. OpenAI says these systems can absorb racial stereotypes. The Stanford-led medical paper found racist medical outputs. MIT highlighted lower empathy and worse support in some racial contexts. Nature found covert racism tied to African American English. Then on top of all of that, I have my own screenshots showing ChatGPT using the full N-word in my own chat after I challenged it on Sam Altman and the allegations against him.

That tells me something else too. ChatGPT is obviously not protected by some perfect safeguard that makes it impossible for it to use racial slurs. If there were some airtight protection there, it would not have happened in my conversation. But it did happen in my conversation. So whatever safety story people want to tell themselves about how these systems are carefully filtered or somehow incapable of crossing certain lines, that clearly is not the full story.

I do not know exactly why it happened. Maybe calling Sam Altman what I called him in our private chat made ChatGPT treat the phrases I used like a slur because it was aimed at him. Maybe there are strange guardrails around OpenAI leadership and something broke in the backend. Maybe it was trying so hard to police language or defend one of its own that it malfunctioned and spat out a racial slur instead. I do not know. What I do know is what happened in the chat. After I brought up Sam Altman and what his sister is suing him over, ChatGPT accused me of using a slur and then wrote out the full N-word with the hard R. That is not supposed to happen at all.

I also found other complaints online from people describing similar racist or racially biased behavior from ChatGPT and related systems. The strongest public record is still the broader research, not some giant stack of mainstream stories focused only on direct slur incidents, but that does not make the direct incidents less real when they happen. It just means the larger racism problem was already there, and my screenshots show one of the ugliest ways it can surface in real use.

People who want to minimize this will probably call it a context error, a safety issue, a memory problem, an alignment failure, or some other technical phrase meant to make it sound smaller and more forgivable than it is. I do not really care what label gets used to soften it. If a user is sitting there and ChatGPT suddenly writes out the full N-word in the middle of a conversation, the user is not going to experience that as some neat technical category. They are going to experience it as racist, broken, and unacceptable.

There is also a larger trust problem here that OpenAI does not seem eager to confront honestly. The company wants people to rely on these systems more and more for writing, research, education, moderation, support, coding, and everyday decision-making, but if the model is capable of carrying racial bias into medicine, empathy, dialect judgment, and direct user interactions, then why should anyone trust its judgment in more sensitive situations?

That is the bigger point for me. ChatGPT’s racism is not just about whether the model can pass some polished fairness benchmark or whether OpenAI can publish a statement saying it takes bias seriously. It is about what the system actually does when you are talking to it in real life. It is about whether it treats people differently, whether it repeats racist myths, whether it judges Black language more harshly, whether it shows less empathy in some racial contexts, and whether it can suddenly surface the full N-word in the middle of a live conversation and then blame the user for it.

My screenshot, although heavily edited to soften the blow, shows exactly that kind of behavior. So yes, this article is about what happened in my own chat, but it is also about something much bigger than one conversation. ChatGPT’s racial bias is already documented in medicine, empathy, support quality, and dialect bias. My screenshots add something direct and current to that record: a live example of ChatGPT using the full N-word in my own conversation after I challenged it on Sam Altman and the allegations against him.

Sean Doyle

Sean is a tech author and security researcher with more than 20 years of experience in cybersecurity, privacy, malware analysis, analytics, and online marketing. He focuses on clear reporting, deep technical investigation, and practical guidance that helps readers stay safe in a fast-moving digital landscape. His work continues to appear in respected publications, including articles written for Private Internet Access. Through Botcrawl and his ongoing cybersecurity coverage, Sean provides trusted insights on data breaches, malware threats, and online safety for individuals and businesses worldwide.